Note: This site is moving to KnowledgeJump.com. Please reset your bookmark.

Testing Instruments in Instructional Design

In this step, tests are constructed to evaluate the learner's mastery of the learning objectives. You might wonder why the tests are developed so early, in the design phase, instead of in the development phase after all of the training material has been built. In the past, tests were often the last items developed in an instructional program. This is fine, except that many of those tests ended up testing the instructional material itself: nice-to-know information, items not directly related to the learning objectives, and so on. Building the tests from the objectives, before the material exists, keeps them focused on what the learner must actually be able to do.

The purpose of the test is to promote the development of the learner. It determines whether the desired performance change has occurred following the training activities, and it does this by evaluating the learner's ability to accomplish the Performance Objective. It is also a good way to provide feedback to both the learner and the instructor.

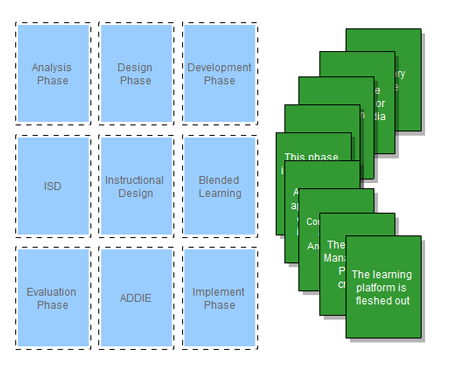

The Performance Objective should closely simulate the conditions, behaviors, and standards of the performance needed in the real world; hence the evaluation at the end of the instruction should match the objective. The methodology and content of the learning program should directly support the performance and learning objectives. The instructional media should explain, demonstrate, and provide practice. Then, once learners have learned, they can perform on the test, meet the objective, and perform as they must in the real world. The diagram above illustrates how it all flows together.

Testing Terms

Tests are often referred to as evaluations or measurements. However, in order to avoid confusion, three terms will be defined:

- Test or Test Instrument: A systematic procedure for measuring a sample of an individual's behavior, such as a multiple-choice test or a performance test. (Brown, 1971)

- Evaluation: A systematic process for collecting and using information from many sources to interpret results and to make value judgments and decisions. This collection of results or scores is normally used in the final analysis of whether a learner passes or fails. In a short course, the evaluation could consist of one test, while in a larger course it could consist of dozens of tests. Evaluation is also the process of determining the value and effectiveness of a learning program, module, or course. (Wolansky, 1985)

- Measurement: The process employed to obtain a quantified representation of the degree to which a learner reflects a trait or behavior. A measurement is one of the many scores that an individual may achieve on a test. An evaluator is most interested in the gap between a learner's score and the maximum possible score: if the test instrument is valid, that gap marks an area the learner did not master. (Wolansky, 1985)

Planning the Test

Before plunging directly into writing test items, construct a plan. Without an advance plan, some topics may be over- or under-represented, while others may be left untouched. It is often easier to build test items on some topics than on others, and these easier topics tend to get over-represented. It is also easier to build test items that require the recall of simple facts than questions calling for critical evaluation, integration of different facts, or application of principles to new situations.

A good evaluation plan has a descriptive scheme that states what the learners may or may not do while taking the test. It includes behavioral objectives, content topics, the distribution of test items, and what the learner's test performance really means.
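A small table of specifications can work out the distribution of test items. The Python sketch below is one way to do it, assuming each objective has been given a relative weight (for example, its importance on the job). The objective names, the weights, and the largest-remainder rounding rule are illustrative choices, not part of any standard method.

```python
from math import floor


def allocate_items(weights: dict[str, float], total_items: int) -> dict[str, int]:
    """Split total_items across objectives in proportion to their weights.

    Largest-remainder rounding keeps the counts summing to total_items.
    """
    weight_sum = sum(weights.values())
    exact = {obj: total_items * w / weight_sum for obj, w in weights.items()}
    counts = {obj: floor(x) for obj, x in exact.items()}
    leftover = total_items - sum(counts.values())
    # Hand the remaining items to the objectives with the largest fractional parts.
    by_remainder = sorted(exact, key=lambda obj: exact[obj] - counts[obj], reverse=True)
    for obj in by_remainder[:leftover]:
        counts[obj] += 1
    return counts


# Hypothetical objectives with relative weights (e.g., importance of each task).
blueprint = {
    "Recall safety rules": 1,
    "Apply pricing procedure": 3,
    "Troubleshoot register errors": 2,
}
print(allocate_items(blueprint, 20))
# → {'Recall safety rules': 3, 'Apply pricing procedure': 10, 'Troubleshoot register errors': 7}
```

Fixing the weights before any items are written keeps the easy-to-write topics from crowding out the harder ones.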

Types of Tests

There are several varieties of tests. The most commonly used in training programs are criterion-referenced Written Tests, Performance Tests, and Attitude Surveys. Although there are exceptions, each type of test is normally used to test one of the three learning domains (Krathwohl, et al., 1964). Although most tasks require the use of more than one learning domain, one domain generally stands out. The dominant domain should be the focal point of one of the following evaluations:

- Criterion-referenced Test: Evaluates the cognitive domain, which includes the recall or recognition of facts, procedural patterns, and concepts that serve in the development of intellectual abilities and skills. These abilities and skills are often measured with a written test or a performance test. Note: A criterion-referenced evaluation focuses on how well a learner performs against a known standard or criterion. This differs from a norm-referenced evaluation, which focuses on how well a learner performs in comparison with other learners or peers.

- Performance Test: Evaluates the psychomotor domain that involves physical movement, coordination, and use of the motor-skill areas. Measured in terms of speed, precision, distance, procedures, or techniques in execution. Can also be used to evaluate the cognitive domain. A performance test is also a criterion-referenced test if it measures against a set standard or criterion. A performance test that evaluates to see who can perform a task the quickest would be a norm-referenced performance test.

- Attitude Survey: Evaluates the affective domain that addresses the manner in which we deal with things emotionally, such as feelings, values, appreciation, enthusiasms, motivations, and attitudes. Attitudes are not observable; therefore, a representative behavior must be measured. For example, we cannot tell if a worker is motivated by looking at her or testing her. However, we can observe some representative behaviors, such as being on time, working well with others, performing tasks in an excellent manner, etc.
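The criterion-referenced versus norm-referenced distinction in the first item above can be shown by reading the same scores two ways. In this minimal sketch the learner names, the scores, and the 80-point standard are hypothetical. "Percentage of the group scoring below" is only one of several ways to express a norm-referenced standing.

```python
def criterion_referenced(score: float, cut_score: float) -> str:
    """Judge the learner against a fixed standard, regardless of how others did."""
    return "mastered" if score >= cut_score else "not yet mastered"


def percent_scoring_below(score: float, group: list[float]) -> float:
    """Norm-referenced view: percentage of the group scoring below this learner."""
    below = sum(1 for s in group if s < score)
    return 100.0 * below / len(group)


# Hypothetical class results and an assumed 80-point standard.
scores = {"Ana": 92, "Ben": 85, "Cal": 81, "Dee": 78}
CUT_SCORE = 80
for name, score in scores.items():
    verdict = criterion_referenced(score, CUT_SCORE)
    rank = percent_scoring_below(score, list(scores.values()))
    print(f"{name}: {verdict}; scored above {rank:.0f}% of the group")
```

Cal meets the standard even though only one learner scored lower. A purely norm-referenced reading would hide that, and the standard is what a training objective actually cares about.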

Whenever possible, criterion-referenced performance tests should be used. Having a learner perform the task under realistic conditions is normally a better indicator of a person's ability to perform the task under actual working conditions than a test that asks them questions about the performance.

If a performance test is not possible, then a criterion-referenced written test should be used to measure the learners' achievement against the objectives. The test items should determine whether the learner has acquired the KSAs required to perform the task. Since a written test samples only a portion of the population of behaviors, the sample must be representative of the behaviors associated with the task, and to be representative it must also be comprehensive.

eLearning and Drag and Drop

Testing learners on an eLearning platform uses many of the same techniques as classroom testing, but eLearning merits a few words because the tests can differ. One difference is that eLearning tests can offer immediate feedback. For example, one of the more popular methods is Drag and Drop: clicking on a virtual object and dragging it onto another virtual object. Some Drag and Drop tests score the results at the end of the game. Others provide immediate feedback by allowing each object to be dropped only onto its correct target. This lets learners practice their skills and knowledge before moving on to a scored test instrument.
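The practice mode described above only allows an object to be dropped onto its correct target, so it comes down to a simple check on each drop. The class below is a hypothetical model written for illustration, not the API of any particular eLearning authoring tool. It accepts a correct drop, rejects a wrong one, and counts the misses so they could be reported back to the learner or instructor.

```python
from dataclasses import dataclass, field


@dataclass
class DragDropActivity:
    """Practice-mode drag and drop: only a correct drop is accepted.

    answer_key maps each draggable item to the target it belongs on.
    (Hypothetical model, not tied to any specific eLearning tool.)
    """
    answer_key: dict[str, str]
    placed: dict[str, str] = field(default_factory=dict)
    wrong_attempts: int = 0

    def drop(self, item: str, target: str) -> bool:
        """Lock the item in place and return True if the target is correct;
        otherwise reject the drop (the item snaps back) and count the miss."""
        if self.answer_key.get(item) == target:
            self.placed[item] = target
            return True
        self.wrong_attempts += 1
        return False

    def complete(self) -> bool:
        """True once every item has been placed on its correct target."""
        return len(self.placed) == len(self.answer_key)


# Hypothetical activity: match ISD tasks to the phase where they happen.
activity = DragDropActivity({"Write objectives": "Design", "Build media": "Development"})
print(activity.drop("Build media", "Design"))       # False: rejected
print(activity.drop("Build media", "Development"))  # True: accepted
print(activity.drop("Write objectives", "Design"))  # True: accepted
print(activity.complete(), activity.wrong_attempts)  # True 1
```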


Performance Tests

A performance test allows the learner to demonstrate a skill that was taught in a training program. Performance tests are also criterion-referenced in that they require the learner to demonstrate the behavior stated in the objective. For example, the learning objective “Calculate the exact price of a sale using a cash register” could be tested by having the learners use a cash register to ring up the total for a given number of sale items. The evaluator should have a checklist of all the performance steps that the learner must perform to pass the test. If the standard is met, the learner passes. If any of the steps are missed or performed incorrectly, the learner should be given additional practice and coaching and then retested.

There are three critical factors in a well-conceived performance test:

- The learner must know what behaviors (actions) are required in order to pass the test. This is accomplished by providing adequate practice and coaching sessions throughout the learning sessions. Prior to the performance evaluation, the steps required for a successful completion of the test must be understood by the learner.

- The necessary equipment and scenario must be ready and in good working condition prior to the test. This is accomplished by prior planning and a commitment by the leaders of the organization to provide the necessary resources.

- The evaluator must know what behaviors are to be looked for and how they are rated. The evaluator must know each step of the task to look for and the parameters for the successful completion of each step.
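The evaluator's checklist can be treated as an all-or-nothing rule: the learner passes only if every step is observed performed to standard. This minimal sketch assumes that rule and uses hypothetical steps for the cash-register objective. A step the evaluator did not record counts as missed, so the learner is coached and retested on it.

```python
def score_checklist(steps: list[str], observed: dict[str, bool]) -> tuple[bool, list[str]]:
    """Criterion-referenced checklist scoring.

    Returns (passed, missed_steps). The learner passes only if every step
    was observed performed correctly; unrecorded steps count as missed.
    """
    missed = [step for step in steps if not observed.get(step, False)]
    return (not missed, missed)


# Hypothetical check sheet for the cash-register objective.
CHECK_SHEET = [
    "Enter every sale item",
    "Apply any listed discounts",
    "Add sales tax",
    "State the correct total to the customer",
]
observed = {
    "Enter every sale item": True,
    "Apply any listed discounts": False,
    "Add sales tax": True,
    "State the correct total to the customer": True,
}
passed, retrain = score_checklist(CHECK_SHEET, observed)
print("PASS" if passed else f"Coach and retest on: {retrain}")
```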

Written Tests

A written test may contain any of these types of questions:

- Open-ended question: This is a question with an unlimited answer. The question is followed by an ample blank space for the response.

- Checklist: This question lists items and directs the learner to check those that apply to the situation.

- Two-Way question: This type of question has alternate responses, such as yes/no or true/false.

- Multiple-Choice question: This gives several choices, and the learner is asked to select the most correct one.

- Ranking Scales: This type of question requires the learner to rank a list of items.

- Essay: Requires an answer in a sentence, paragraph, or short composition. The chief criticism leveled at essay questions is the wide variation in how instructors grade them. A chief criticism of the other types of questions (multiple choice, true/false, etc.) is that they emphasize isolated bits of information. They therefore measure a learner's ability to recognize the right answer, but not the ability to recall or reproduce it. In spite of this criticism, learners who score high on these types of questions also do well on essay examinations. Thus, the two kinds of tests appear to measure the same type of competencies.

Multiple Choice

The most commonly used question in training environments is the multiple-choice question. Each question is called a test item. The parts of the test item are labeled as:

1. This part of the test item is called the "stem."
_____ a. The incorrect choices are called "distracters."
_____ *. Correct response
_____ c. Distracter
_____ d. Distracter

When writing multiple-choice questions, follow these points to build a well-constructed test instrument:

- The stem should present the problem clearly.

- Only one correct answer should be included.

- Distracters should be plausible.

- 'All of the above' should be used sparingly. If it is used, it should be the correct answer about as often as it is an incorrect choice (distracter). Do not use 'None of the above'.

- Each item should test one central idea or principle. This enables the learner to concentrate on answering the question instead of dissecting the question. It also allows the instructor to determine exactly which principles were not comprehended by the learner.

- The distracters and answer for a question should be listed in a logical series, such as high to low, low to high, alphabetical, longest to shortest, like vs. unlike, or by function.

- Test items can often be improved by modifying the stem. In the pair of examples below, the better version moves the words that every distracter repeated into the stem. This makes the question easier to read.

Poor example:

1. The written objectives statement should
_____ a. reflect the identified needs of the learner and developer
_____ b. reflect the identified needs of the learner and organization
_____ c. reflect the identified needs of the developer and organization
_____ d. reflect the identified needs of the learner and instructor

Better example:

1. The written objectives statement should reflect the identified needs of the
_____ a. learner and developer
_____ b. learner and organization
_____ c. developer and organization
_____ d. learner and instructor

The distracters should be believable and in sequence:

Poor example:

2. A student who earns scores of 60, 70, 75, 95, and 95 would have a mean score of
_____ a. 79
_____ b. 930
_____ c. 3
_____ d. 105

In the above example, all the distracters were simply chosen at random. A better example with believable distracters and numbers in sequence would be:

2. A student who earns scores of 60, 70, 75, 95, and 95 would have a mean score of
_____ a. 5 (total number of scores)
_____ b. 75 (median)
_____ c. 79 (correct response)
_____ d. 95 (mode)
(Also notice that the choices are in numerical order.)

If an item analysis is performed on the above example, we might discover that none of the learners choose the first distracter (a). In our search for a better distracter, the instructor informs us that some learners enter the class believing the myth that the mean is found with the incorrect formula on the left below, instead of the correct formula on the right (where $\sum X$ is the sum of the scores and $N$ is the number of scores):

$$\bar{X} = \frac{\sum X}{N + 1} \;\;\text{(incorrect)} \qquad\qquad \bar{X} = \frac{\sum X}{N} \;\;\text{(correct)}$$

That is, they add 1 to the total number of scores. We could change the first distracter (a) as follows:

2. A student who earns scores of 60, 70, 75, 95, and 95 would have a mean score of
_____ a. 65 (answer if the incorrect formula is used)
_____ b. 75 (median)
_____ c. 79 (correct response)
_____ d. 95 (mode)
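The numbers in the revised item can be checked directly. This sketch uses Python's `statistics` module to check three things. First, only choice (c) equals the true mean. Second, the other choices come from the median, the mode, and the add-1-to-the-count misconception. Third, the options run in numerical order. Note that 395 / 6 is about 65.8; the sketch truncates with integer division to reproduce the 65 used in the item.

```python
from statistics import mean, median, mode

scores = [60, 70, 75, 95, 95]

key = mean(scores)                        # correct response: sum / N
myth = sum(scores) // (len(scores) + 1)   # misconception: sum / (N + 1), truncated
choices = {
    "a": myth,            # distracter built from the myth
    "b": median(scores),  # distracter: median
    "c": key,             # keyed answer
    "d": mode(scores),    # distracter: mode
}
print(choices)

# Exactly one option equals the true mean, and the options run low to high.
assert [opt for opt, value in choices.items() if value == key] == ["c"]
assert list(choices.values()) == sorted(choices.values())
```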

A later item analysis might show that learners are not choosing the new distracter because the instructor is adequately dispelling the myth. Even so, the distracter could be left in, because it lets the instructor know whether the myth continues to be dispelled.
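A distracter analysis like the one described above only needs a count of how often each option was chosen. The sketch below assumes a hypothetical set of ten responses to item 2 and flags any distracter that no learner picked. A full item analysis would also look at item difficulty and discrimination, which this sketch leaves out.

```python
from collections import Counter


def option_shares(responses: list[str], options: str) -> dict[str, float]:
    """Proportion of learners who chose each option (0.0 if nobody did)."""
    counts = Counter(responses)
    return {opt: counts[opt] / len(responses) for opt in options}


def nonfunctioning_distracters(responses: list[str], options: str, key: str) -> list[str]:
    """Distracters that no learner chose; candidates for rewriting."""
    shares = option_shares(responses, options)
    return [opt for opt in options if opt != key and shares[opt] == 0]


# Hypothetical responses from ten learners to item 2, keyed "c".
responses = list("cccbcdcbcc")
print(option_shares(responses, "abcd"))
print(nonfunctioning_distracters(responses, "abcd", key="c"))
```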

If a plausible distracter cannot be found, use fewer distracters. Four choices are considered the standard for multiple-choice questions because they allow only a 25% chance of guessing the correct answer. Even so, use three if another believable distracter cannot be constructed. A distracter should never be added just to provide four choices, as it wastes the learner's time reading through the possible choices.

Also, notice that the layout of the above example makes an excellent score sheet for the instructor as it gives all the required information for a full review of the evaluation.

True and False

True and false questions provide an adequate method for testing learners when enough plausible distracters cannot be constructed for a multiple-choice question, or to break up the monotony of a long test.

Multiple-choice questions are generally preferable because a learner who does not know the answer has a 25 percent chance of guessing correctly on a question with four choices, or about 33 percent on a question with three choices. With a true-false question, the odds improve to a 50 percent chance of guessing the correct answer.
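The per-item odds compound over a whole test. If a learner guesses blindly on every item, the number of correct guesses follows a binomial distribution. Blind guessing is a simplification, since real learners can often rule out a choice or two. The 10-item test and the cut score of 7 correct below are assumptions for illustration.

```python
from math import comb


def chance_of_guessing_at_least(n_items: int, n_choices: int, needed: int) -> float:
    """Probability that blind guessing on every item yields at least `needed`
    correct answers (binomial model with p = 1 / n_choices per item)."""
    p = 1 / n_choices
    return sum(comb(n_items, k) * p**k * (1 - p) ** (n_items - k)
               for k in range(needed, n_items + 1))


# Assumed 10-item test with a cut score of 7 correct.
for choices in (2, 3, 4):
    print(f"{choices} choices: per-item {1 / choices:.0%}, "
          f"reach the cut score by guessing {chance_of_guessing_at_least(10, choices, 7):.2%}")
```

Comparing the printed lines shows how much more easily a guesser reaches the cut score when every item offers only two choices.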

True and false questions are constructed as follows:

___ T ___ F 1. There should always be twice as many true statements as false statements in a True/False test.
___ T ___ F 2. Double negative statements should not be used in True/False test statements.

Question 1 is false as there should be approximately an equal number of true and false items. Question 2 is true for any type of question. Other pointers when using True and False tests are:

- Use definite and precise meanings in the statements.

- Do not lift statements directly from books or notes.

- Distribute the true and false statements randomly in the test instrument.
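The advice to use roughly equal numbers of true and false statements, distributed randomly, can be planned before the statements are written. This hypothetical helper builds a balanced answer key and shuffles it with Python's `random` module. The item writer would then write a true or false statement to match each position. The seed only makes the key reproducible.

```python
import random
from typing import Optional


def build_tf_key(n_items: int, seed: Optional[int] = None) -> list[bool]:
    """Return a True/False answer key with (nearly) equal counts of each value,
    shuffled so the order gives no pattern away."""
    n_true = (n_items + 1) // 2            # odd counts get one extra True
    key = [True] * n_true + [False] * (n_items - n_true)
    random.Random(seed).shuffle(key)       # seeded so the key can be reproduced
    return key


key = build_tf_key(10, seed=42)
print(" ".join("T" if answer else "F" for answer in key))
```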

Open Ended Questions

Open-ended questions are a better method of testing than multiple-choice or true-false questions because they allow little or no guessing, but they take longer to construct and are more difficult to grade. Open-ended questions are constructed as follows:

1. In what phase of the Instructional System Design model are tests constructed? ____________________ (This is an example of a direct question.)
2. Open-ended test statements should not begin with a ____________________. (This is an example of an incomplete statement.)

The blank should be placed near the end of the sentence:

Poor example:

3. ____________________ is the formula for computing the mean.

Better example:

3. The formula for computing the mean is ____________________.

Placing the blank at or near the end of a statement allows the learner to concentrate on the intent of the statement. Also, the overuse of blanks tends to create ambiguity. For example:

Poor example:

4. _________________ theory was developed in opposition to the _____________ theory of _______________________ by ___________________ and ____________________.

Better example:

4. The Gestalt theory was developed in opposition to the ____________________ theory of psychology by ______________________ and _____________________________.
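The two guidelines for completion items are to keep the blank near the end and to avoid too many blanks. Both are mechanical enough to check automatically. This sketch assumes blanks are typed as runs of three or more underscores and treats more than three blanks as too many. Both assumptions are illustrative, and the checker cannot judge whether the wording itself is clear.

```python
import re

# Assumption: a blank is typed as a run of three or more underscores.
BLANK = re.compile(r"_{3,}")


def check_completion_item(statement: str, max_blanks: int = 3) -> list[str]:
    """Return warnings for a fill-in-the-blank statement (empty list means no issues)."""
    warnings = []
    blanks = BLANK.findall(statement)
    if not blanks:
        warnings.append("no blank found")
    if BLANK.match(statement.lstrip()):
        warnings.append("blank at the start; move it toward the end")
    if len(blanks) > max_blanks:
        warnings.append(f"{len(blanks)} blanks may make the item ambiguous")
    return warnings


print(check_completion_item("_______ is the formula for computing the mean."))
print(check_completion_item("The formula for computing the mean is _______."))
```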

Attitude Surveys

Attitude surveys measure the attitudes associated with a training program, an organization, or selected individuals. The goal might be to change the entire organization (Organizational Development) or to measure a learner's attitude in a specific area. Since attitudes are latent constructs and are not observable in themselves, the developer must identify some behavior that is representative of the attitude in question. This behavior can then be measured as an index of the attitude construct.

Often, the survey must be administered several times because employees' attitudes vary over time. Before-and-after measurements should be taken to show changes in attitude. Generally, a survey is conducted one or more times to assess the attitude in a given area, and then a program is undertaken to change the employees' attitudes. After the program is completed, the survey is administered again to test the program's effectiveness.
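The before-and-after comparison can be summarized as the change in the mean rating for each survey item. This minimal sketch assumes a hypothetical 1-to-5 agreement scale and the same respondents on both administrations. A difference in means describes the change but does not by itself show that the program caused it.

```python
from statistics import mean


def attitude_change(before: dict[str, list[int]], after: dict[str, list[int]]) -> dict[str, float]:
    """Change in mean rating per survey item between the pre- and post-program
    administrations; positive values mean responses moved up the scale."""
    return {item: round(mean(after[item]) - mean(before[item]), 2) for item in before}


# Hypothetical 1-5 agreement ratings from the same five employees.
before = {
    "I receive useful feedback": [2, 3, 2, 3, 2],
    "I would recommend this team": [4, 4, 3, 4, 5],
}
after = {
    "I receive useful feedback": [4, 4, 3, 4, 3],
    "I would recommend this team": [4, 4, 4, 4, 5],
}
for item, delta in attitude_change(before, after).items():
    print(f"{item}: {delta:+.2f}")
```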

A survey example can be found at Job Survey.

One test is worth a thousand expert opinions. — Bill Nye the Science Guy


References

Brown, F.G. (1971). Measurement and Evaluation. Itasca, Ill: F. E. Peacock.

Krathwohl, D.R., Bloom, B.S., Masia, B.B. (1964). Taxonomy of Educational Objectives: Handbook II: Affective Domain. New York: David McKay.

Wolansky, W.D. (1985). Evaluating Student Performance in Vocational Education. Ames, Iowa: Iowa State University Press.